This piece first appeared on Sensible Med on 5/3/2023.

ABMS board certification is not voluntary (despite ABMS member board claims), especially as specialization in medicine is the norm today. And thanks to regulatory capture, physicians who work at ACGME-accredited medical training hospitals in particular must pay for and participate in MOC before funds can be devoted to personally directed continuing professional development (CPD) products provided by others.

Forced participation in MOC and the harms it imposed on physicians have resulted in multiple federal class action antitrust lawsuits being filed against different ABMS member boards beginning in late 2018. These include Kenney v. ABIM (2:18-cv-05260), Siva v. American Board of Radiology (1:19-cv-01407), and Lazarou v. American Board of Psychiatry and Neurology (1:19-cv-01614). Kenney was filed in Philadelphia, PA while the Siva and Lazarou cases were filed in Chicago, IL. Here’s how this non-lawyer physician interprets what has occurred with these cases so far.

Background: Antitrust Law for Dummies

In layman’s terms, federal law prohibits any agreement that creates an unreasonable restraint of trade. One type of restraint of trade is when a seller creates a “tie” by forcing a buyer to purchase separate products together. Here, certification is separate from MOC, and physicians are forced to buy MOC because if they do not their certifications are revoked.

Kenney v. ABIM (2:18-cv-05260)

In Kenney, ABIM argued that MOC was merely a component or modification of certification, and that the two were one product and not separate. Plaintiff argued that ABIM’s analysis failed to examine the two products before MOC was implemented. The court sided with ABIM.

Siva v. American Board of Radiology (1:19-cv-01407)

The American Board of Radiology made the same argument ABIM made in Kenney: that certification and MOC were not separate products. The lower court agreed. But the appeals court found (page 12) that certification and MOC were, in fact, separate products.

Nonetheless, the appeals court upheld the dismissal of plaintiff’s claims because it did not feel MOC was a substitute for other CPD products. This argument was new and had not been addressed before by either the lower court or the parties.

Lazarou v. American Board of Psychiatry and Neurology (1:19-cv-01614)

The lower court recently requested that the Lazarou parties file a brief addressing the impact of the Siva opinion. Plaintiffs explained how Siva had found certification and MOC to be separate products, and described in detail how MOC was indeed a CPD product, and that MOC and other CPD products are substitutes for and interchangeable with each other. Whether the Lazarou case can go forward now lies in the judge’s hands.

Implications of MOC

Regardless of how the judges have ruled or will rule in these cases, MOC has already had far-reaching effects on the US health care system. In my opinion, the MOC story reflects a remarkable betrayal of working physicians by other physicians for one reason: greed. The physician-sycophants who head these organizations have become incredibly wealthy at working physicians’ expense and failed to actively deal with their organizations’ numerous conflicts of interest permitted by their very own bylaws. Physicians are leaving our profession in droves as they are gaslighted, no longer feel valued, and are forced to buy MOC to remain privileged at hospitals, receive insurance payments, and lower their malpractice costs, despite no credible proof that MOC improves patient care or safety.

Worse, countless hours of patient care have been wasted on test preparation and worthless data entry exercises. To have never considered that thousands of patients suffer as a result of MOC is bizarre – unless, of course, patients are of no real concern to those who impose the extortionate MOC program.

It is disturbing when organizations like the Physician Consortium for Performance Improvement (ThePCPI.org), with the same address as the American Board of Medical Specialties, magically disappear from the internet when their collaboration with the medical industry is exposed. Thanks to the digitization of health care, MOC tears a page directly from the old AMA CPT-coding and Facebook-Cambridge Analytica playbook: data make you rich and can advance a political agenda.

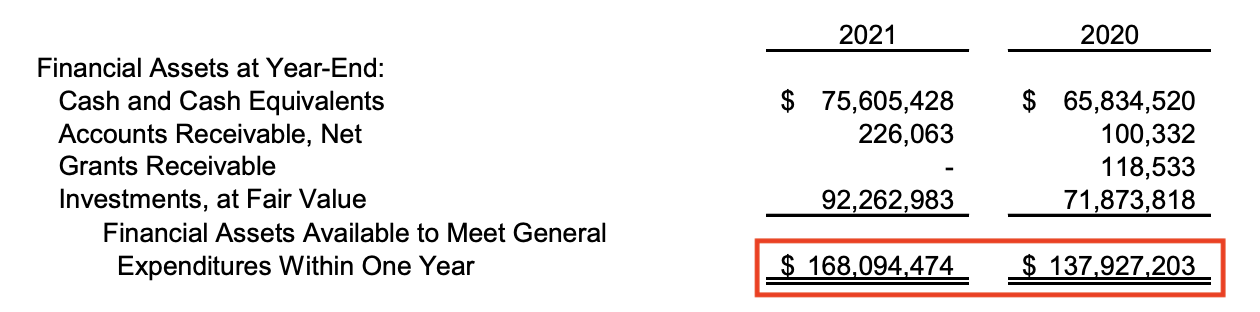

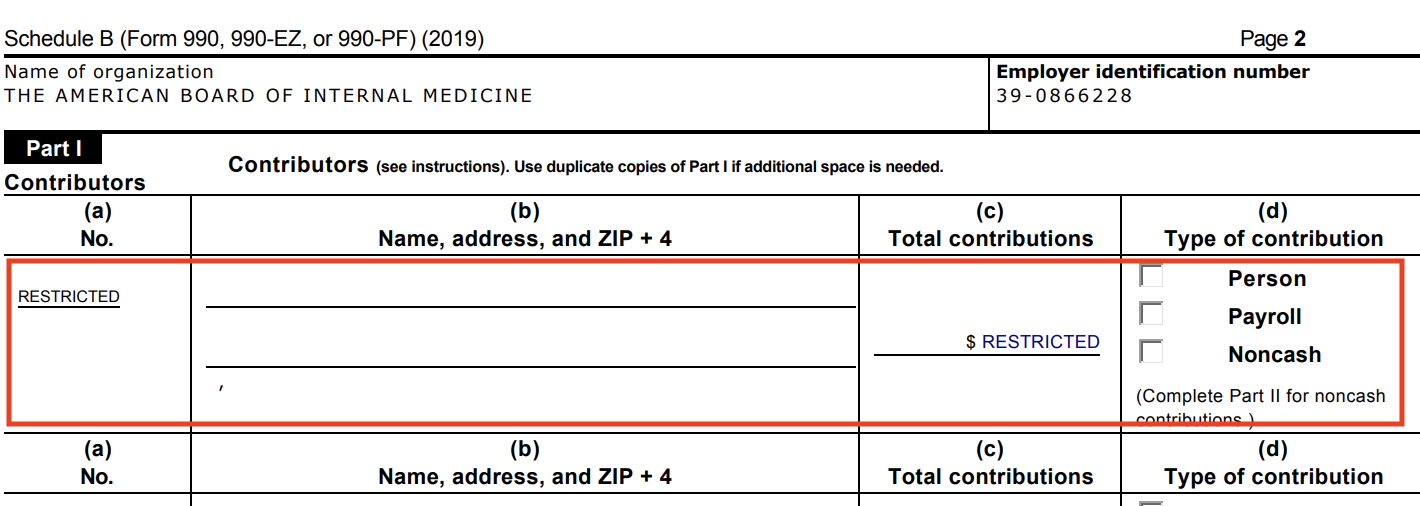

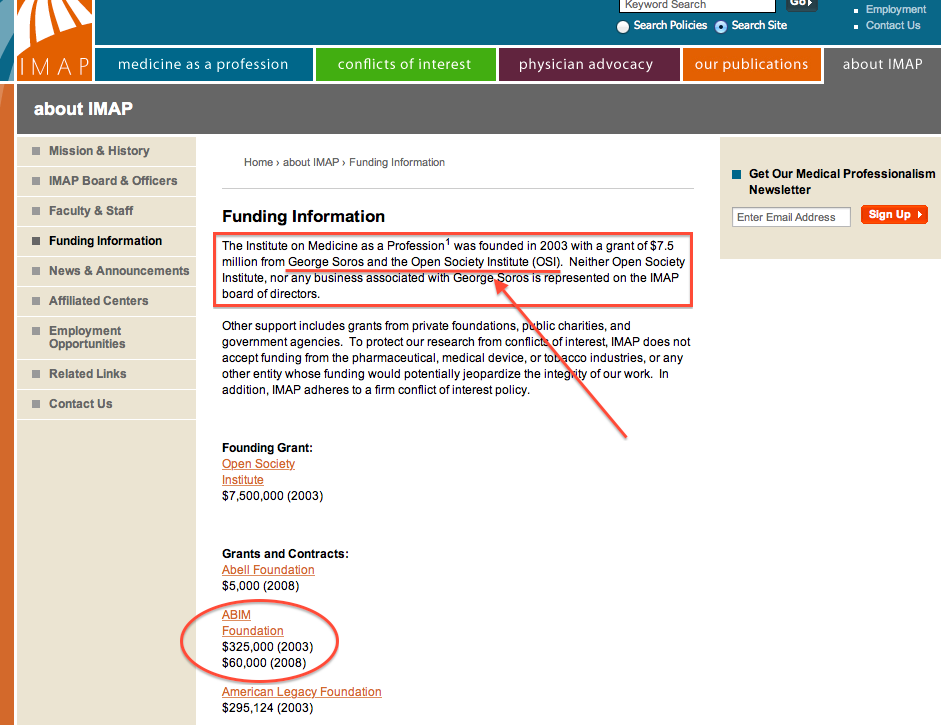

Being slippery about MOC’s purpose voids any semblance of trust by physicians in our health care system. Moral injury is playing a large role in physicians’ disillusionment. All the cute websites in the world called “BuildingTrust.org” made by the ABIM Foundation that secretly re-purposed tens of millions of dollars from physicians for political and retirement fund purposes certainly don’t help. Rather, it is a sick example of how low medicine has stooped to transfer working physicians’ forced-MOC fees to others in return for political favors.

Worst of all perhaps, in a clear and present danger to all patients, MOC promotes the silencing of scientific debate. ABIM, American Board of Pediatrics, and American Board of Family Medicine recently issued a statement with the Federation of State Medical Boards that threatens revocation of certification and even state licensure as a cudgel to quell anything they consider “dissemination of misinformation” by physicians. Meanwhile, Orwell and Semmelweis are rolling in their graves.

All these activities and wasteful spending make me wonder if certification should be continued at all. Our bureaucratic House of Medicine has lost its way. The drive for money and political influence has become so dire that even our own medical specialty societies are now partnering in the ruse.

As I near retirement, I have a choice: pay up and shut up, perpetuating the ruse, so that I can keep using my forty-plus years of medical experience to train and teach my younger colleagues and continue to care for patients; or quit medicine to avoid another round of extortion to remain certified in order to practice at the hospital system I have served for 22 years.

I wish I didn’t have to make such a decision. Medicine is what I do. My patients are what matters, not the ABMS member boards’ retirement funds.

I serve in the trenches with some of the most sterling, heroic physicians I’ve ever had the privilege to work with. I am sure you do, too. I’d just like our regulatory bodies to have the modicum of honesty and credibility that we deserve.

-Wes